Most neoclouds today run like it's 2003.

One customer, one card. Maybe a MIG slice if you're lucky. The result? Up to 80% of every card goes unused. And in inference-heavy workloads, that number climbs even higher.

This model might work for demos.

But it falls apart at scale.

Service providers can’t justify the capex. Margins collapse. And elastic access, the thing cloud is supposed to deliver, never materialises.

This team is building a solution.

And it’s just like the one they built before.

Who are they?

The GPU Audio Companion Issue #59


Company Background

Hosted.ai launched in 2024, but its story starts more than a decade earlier.

The founding team has been solving problems for service providers since 2010. The first solution was OnApp, a turnkey software platform that simplified IaaS market entry for service providers. How? By making it easy to build, monetise, and sell public or private cloud infrastructure.

Then, they built Sunlight.io, a software company that developed a high-performance hyperconverged virtualisation stack for service provider cloud and edge compute environments.

Both of these offerings served to reduce the cost and complexity of building the multi-tenant orchestration and monetisation layers for service providers. This meant more affordable cloud services for customers and healthier margins for the business.

Hosted.ai pursues the same fundamental mission today, but for the new world of GPU-enabled infrastructure:

Make it easy and profitable for any service provider to build and monetise GPU infrastructure for AI workloads.

What They Do

At its core, Hosted.ai’s software solves three problems:

Limited go-to-market paths - Most service providers are stuck choosing between two bad options: resell someone else’s GPUaaS for thin margins, or spend time and resource building their own platform from scratch.

GPU waste - Traditional passthrough models are inefficient, leaving most GPU capacity idle despite significant capex.

Missing monetisation tooling - Traditional IaaS platforms weren’t built for GPUaaS. Billing, metering, and orchestration, if they exist, are clunky bolt-ons.

Hosted.ai solves these problems by giving service providers a turnkey solution to turn any GPU into a revenue-generating, multi-tenant resource:

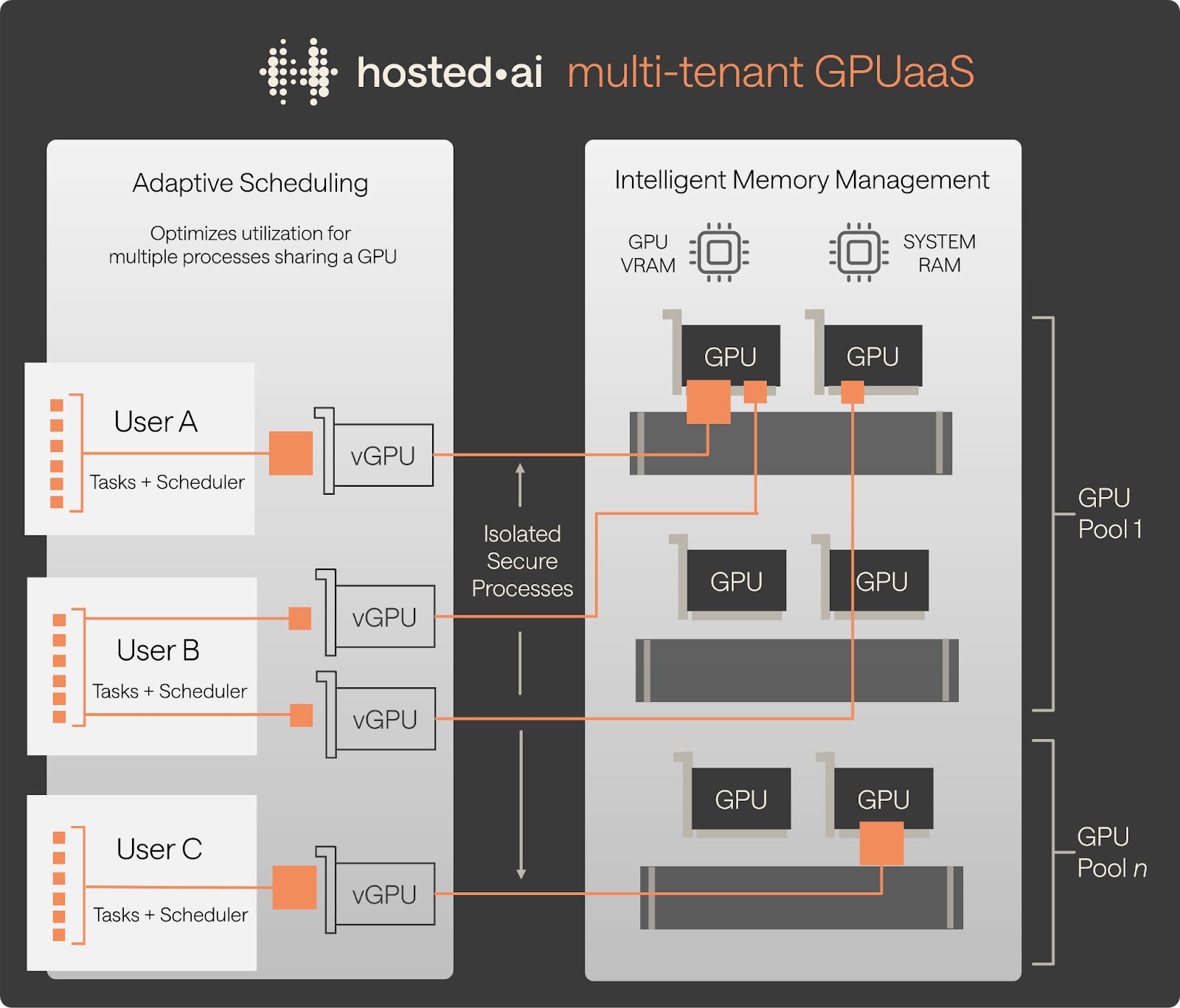

Full GPU virtualisation and overcommit, allowing utilisation to exceed 90%

Elastic provisioning for inference and training workloads

Self-service portals, billing integration, and orchestration out of the box

Compatibility with existing cloud stacks like OpenStack, VMware, ProxMox, and Virtuozzo

Same-day deployment with support for both GPU-only and full-stack HCI environments

Executive Team

Hosted.ai is led by a team that has built platforms serving thousands of providers across 100+ countries, and now they’re applying that experience to the GPU stack.

Ditlev Bredahl (CEO) - Serial founder with six startups and four exits, including OnApp. A vocal advocate of building in public who frequently shares insights with the wider cloud ecosystem.

Julian Chesterfield (CTO) - Former storage architect at XenSource (acquired by Citrix) and founder of Sunlight.io. Built the ultra-high-performance virtualisation engine that now powers Hosted.ai’s GPU abstraction layer.

Narendar Shankar (CCO) - Entrepreneur with three startup exits, including to Akamai and Accenture. Previously held senior roles at VMware, NVIDIA, Cisco, and Expedia. Brings a mix of service provider GTM experience, startup execution, and deep technical understanding of AI infrastructure.

James Withall (COO) - Former CTO at OnApp and product lead at UK2 Group. Led IT and Alliances at Virtuozzo before joining Hosted.ai to scale operations and partner success.

The Edge

True vGPU, Not Just MIG - Hosted.ai virtualises memory and compute across multiple cards, pools them, and lets tenants schedule workloads just like vCPU. Dynamic memory management avoids contention, making it viable for shared inference and training alike.

Overcommit up to 10x - Unlock 2–10x overcommit ratios depending on the workload. This means providers can sell the same physical card multiple times, a practice standard in CPU clouds but rare in GPUaaS. The result: higher revenue per card and faster ROI.

Elastic Pricing and Flexible GTM - By decoupling consumption from hardware constraints, Hosted.ai enables more creative go-to-market models. Providers can offer burst pricing, short-term access, or dynamic tiering for inference-heavy apps. They can undercut hyperscalers, match them while preserving margin, or serve workloads traditional platforms don’t touch.

Full Stack, Turnkey - Hosted.ai ships with orchestration, self-service provisioning, billing integration, model libraries, CRM plugins, and more. That means a faster go-live and a shorter time to revenue.

Hardware-Agnostic and xPU-Ready - The platform works across GPU vendors and is already integrating with alternative AI accelerators.

ROI You Can See - Hosted.ai’s ROI calculator at neocloud.tools makes the business case clear. Most GPUs run at around 50% utilisation. With overcommit (and even dynamic pricing) enabled, providers can extract more value from the same hardware without adding new cards.
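To make the overcommit argument concrete, here is a rough back-of-the-envelope sketch in Python. It is not Hosted.ai’s calculator or pricing model. The function names, the prices, the capex figure, and the 730-hour month are all hypothetical assumptions; only the 50% utilisation figure and the 2–10x overcommit range come from the claims above.

```python
HOURS_PER_MONTH = 730  # assumption: average hours in a calendar month


def monthly_revenue(price_per_slot_hour: float,
                    overcommit_ratio: float,
                    billed_fraction: float,
                    hours: float = HOURS_PER_MONTH) -> float:
    """Estimate monthly revenue for one physical card.

    price_per_slot_hour: hypothetical price charged per tenant slot, per hour.
    overcommit_ratio:    how many times the card is sold (1.0 = passthrough).
    billed_fraction:     share of each slot's hours that is actually billed.
    """
    if overcommit_ratio < 1.0:
        raise ValueError("overcommit_ratio must be at least 1.0")
    if not 0.0 <= billed_fraction <= 1.0:
        raise ValueError("billed_fraction must be between 0 and 1")
    return price_per_slot_hour * hours * overcommit_ratio * billed_fraction


def payback_months(card_capex: float, revenue_per_month: float) -> float:
    """Months of revenue needed to cover the purchase price of the card."""
    if revenue_per_month <= 0:
        raise ValueError("revenue_per_month must be positive")
    return card_capex / revenue_per_month


# Hypothetical scenario: a card costing 29,200, billed about half the time.
passthrough = monthly_revenue(price_per_slot_hour=2.00,
                              overcommit_ratio=1.0,
                              billed_fraction=0.5)

# Same card sold four times over, each slot at half the passthrough price.
overcommitted = monthly_revenue(price_per_slot_hour=1.00,
                                overcommit_ratio=4.0,
                                billed_fraction=0.5)

print(passthrough, payback_months(29_200, passthrough))      # 730.0 40.0
print(overcommitted, payback_months(29_200, overcommitted))  # 1460.0 20.0
```

In this made-up scenario, halving the price per slot while selling the card four times doubles revenue per card and halves the payback period. Real results depend on how much contention a given workload tolerates, which is why the ratio is quoted as a range rather than a fixed number.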

Recent Moves

Hosted.ai has moved quickly to validate its thesis and expand its capabilities:

Acquired Sunlight.io - Added deep virtualisation IP and a proven HCI stack, accelerating time to market and deepening the tech moat.

Raised $4.6M - Backed by strategic investors to grow the platform, fund GTM, and expand globally.

Launch traction - Launched at CloudFest ’25, engaged in 40+ new customer PoC projects and deployments in Q2, and won its first multi-million-dollar global customer contract in Q3 ’25.

Partnered with FuriosaAI - Added support for non-NVIDIA accelerators and began preparing support for open silicon, positioning Hosted.ai as a long-term abstraction layer for heterogeneous GPU/xPU infrastructure.

Shipped major features - Rolled out NVLink multi-GPU support, persistent storage billing, enhanced subscription controls, and secure isolation for multi-tenancy.

Scaled globally - Hired across sales, customer success, and engineering. Active deployments now span Europe, the Americas, Africa, and APAC.

What’s Next

Hosted.ai has already rebuilt GPU provider economics from the ground up, but the long game is just beginning.

The mission stays the same: make GPUaaS profitable, elastic, and accessible for every service provider. But the next phase pushes further into abstraction, orchestration, and silicon independence because the market is shifting.

Training is no longer the only game in town.

As foundation models stabilise and open weights proliferate, the infrastructure battle moves to fine-tuning, inference, and edge deployment.

These workloads don’t always demand the raw power of the latest and greatest hardware. What they need is density, flexibility, price–performance optimisation, and the ability to run reliably across mixed silicon environments.

This is where Hosted.ai’s platform advantage compounds.

And it’s where the roadmap is headed.

The next evolution of the scheduling engine is already underway. Hosted.ai is tuning it further to maximise GPU utilisation and reduce idle compute. That means smarter resource allocation, tighter memory management, and better responsiveness under mixed-tenant loads.

For end users, it translates into faster results at lower cost, and for GPU providers, it means higher revenue per card.

On the deployment side, Hosted.ai is expanding its support for pre-configured model environments.

These aren’t just container templates. They’re full-stack, production-ready setups for AI teams who want to get to inference or fine-tuning without building everything from scratch.

That means a smaller gap between GPU availability and usable, revenue-generating services.

Hardware support is also going broader.

While most GPUaaS platforms are locked into a single vendor, Hosted.ai is extending its platform to handle a growing list of alternative accelerators, abstracting the quirks, so service providers can slot in whichever hardware makes commercial sense.

All in the same control pane.

Finally, to tie it all together, Hosted.ai is working with GPU procurement and financing partners to make infrastructure deployment more accessible.

The idea is simple: combine flexible hardware access with high-efficiency software, and turn GPUs into elastic, metered services. With higher utilisation, streamlined automation, more choice, and no need for a multi-billion-dollar credit line.

It’s a bold play, and if their thesis is correct, Hosted.ai has a real shot at disrupting the neocloud software market in the same way that neoclouds are disrupting the cloud market.

The only question is whether the market agrees.

And whether the incumbents will allow themselves to be caught out again.